Airflow has a very extensive set of operators available, some built into the core and others shipped with pre-installed providers. An operator represents a single, ideally idempotent, task. Operators determine what actually executes when your DAG runs. See the Operators Concepts documentation.

Some popular operators from core include BashOperator, which executes a bash command, and PythonOperator, which calls an arbitrary Python function. There are various built-in operators in Airflow for performing specific tasks: PythonOperator runs a Python function, SimpleHTTPOperator invokes a REST API and handles the response, and EmailOperator sends an email. To interact with databases there are several operators, such as MySQLOperator for MySQL.

This operator is written and maintained by Stackable and is part of a larger data platform. Stackable makes it easy to operate data applications in any Kubernetes cluster. The data platform offers many operators, with new ones being added continuously. All our operators are designed and built to be easily interconnected and consistent to work with.

Stackable GmbH is the company behind the Stackable Data Platform, offering professional services, paid support plans and custom development. We develop and test our operators on several cloud platforms, including Kubernetes (for an up-to-date list of supported versions, please check the release notes in our docs).

The operators that are currently part of the Stackable Data Platform include the Stackable Operator for Apache Hadoop HDFS and the Stackable Operator for Apache ZooKeeper.

Contributions are welcome. Follow our Contributors Guide to learn how you can contribute.

Get started with the community edition! If you want professional support, we offer subscription plans and custom licensing.
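To make "a single, ideally idempotent, task" concrete, here is a minimal sketch of the kind of plain Python function one might hand to PythonOperator through its python_callable argument. The function name write_daily_report, its parameters and the file layout are hypothetical and not taken from Airflow or Stackable. It uses only the standard library, so it runs without Airflow installed.

```python
import json
import tempfile
from pathlib import Path


def write_daily_report(output_dir: str, run_date: str, rows: list) -> Path:
    """Write the report for one run date (hypothetical example task).

    The target path depends only on run_date, and the file is replaced
    rather than appended to, so running the task twice for the same date
    leaves the same result as running it once.
    """
    target = Path(output_dir) / f"report_{run_date}.json"
    # Write to a temporary file first, then swap it into place, so an
    # interrupted run does not leave a half-written report behind.
    tmp = target.parent / (target.name + ".tmp")
    tmp.write_text(json.dumps(rows, sort_keys=True))
    tmp.replace(target)
    return target


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as out:
        first = write_daily_report(out, "2021-01-01", [{"id": 1}])
        second = write_daily_report(out, "2021-01-01", [{"id": 1}])
        # Both runs point at the same single file.
        print(first == second, len(list(Path(out).iterdir())))
```

The design choice is that a retry or a re-run of the same DAG run overwrites its own output instead of duplicating it, which is what makes retries safe.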

If you have a question about the Stackable Data Platform, contact us via our homepage or ask a public question in our Discussions forum. The documentation for all Stackable products can be found at.

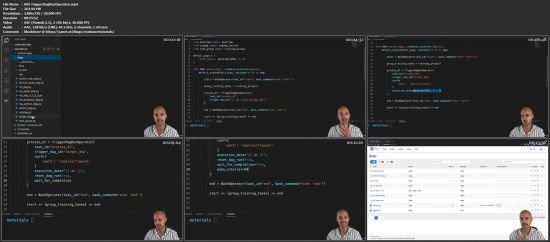

This is a Kubernetes operator to manage Apache Airflow ensembles. It is part of the Stackable Data Platform, a curated selection of the best open source data apps like Kafka, Druid, Trino or Spark, all working together seamlessly. Based on Kubernetes, it runs everywhere, on prem or in the cloud. You can install the operator using stackablectl or helm. Read on to get started with it, or see it in action in one of our demos. The stable documentation for this operator can be found here. If you are interested in the most recent state of this repository, check out the nightly docs instead.

Apache Airflow Python DAG files can be used to automate workflows or data pipelines in Cloudera Data Engineering. In the Apache Airflow: The Operators Guide, you are going to learn how to create reliable, efficient and powerful tasks in your Airflow data pipelines.

Airflow has many providers and there are a lot of operators already in the ecosystem, but at times you may need to build your own operator to talk to external systems for which there is no operator yet.

Problem: The webserver returns the following error: Broken DAG: /usr/local/airflow/dags/testoperator. The error is followed by the imports: from airflow import DAG; from airflow.operators import PythonOperator; from import BranchPythonOperator; from import.

With NativeEnvironment, rendering a template produces a native Python type.

This is the behavior you get when render_template_as_native_obj is set to True on the DAG. The example DAG is defined as dag = DAG(dag_id="example_template_as_python_object", schedule=None, start_date=pendulum.datetime(2021, 1, 1, tz="UTC"), catchup=False, render_template_as_native_obj=True). It declares a task with task_id="extract", implemented by def extract():, whose body begins with data_string = '.
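The difference between string rendering and native rendering can be sketched without Airflow or Jinja installed. The snippet below is only an imitation under stated assumptions: it uses ast.literal_eval from the standard library to turn rendered text into a Python object when the text is a valid literal, and it falls back to the plain string otherwise. The helper name to_native and the sample values are hypothetical. This is not Airflow's or Jinja's actual code.

```python
import ast


def to_native(rendered: str):
    """Return a Python object if the rendered text is a literal, else the text.

    Hypothetical helper that imitates the effect of native rendering:
    text that looks like a dict, list or number becomes that type, and
    anything else stays a string.
    """
    try:
        return ast.literal_eval(rendered)
    except (ValueError, SyntaxError):
        return rendered


if __name__ == "__main__":
    rendered = "{'a': 1, 'b': [2, 3]}"  # illustrative template output
    # Default (string) rendering would hand the task this text unchanged.
    print(type(rendered).__name__)
    # Native-style rendering hands the task a real dict instead.
    print(type(to_native(rendered)).__name__)
    # Text that is not a literal is left as a string.
    print(type(to_native("hello world")).__name__)
```

With string rendering, a downstream task that receives a templated dictionary has to parse it itself. With native rendering it can use the value directly.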
